Since launching our generative AI offering just a few short months ago, we've seen, heard, and experienced intense and accelerated AI innovation, with remarkable breakthroughs. As a long-time machine learning advocate and industry leader, I've witnessed many such breakthroughs, perfectly represented by the steady excitement around ChatGPT, released almost a year ago.

And just as ecosystems thrive on biological diversity, the AI ecosystem benefits from multiple providers. Interoperability and system flexibility have always been key to mitigating risk, so that organizations can adapt and continue to deliver value. But the unprecedented speed at which generative AI is evolving has made optionality a critical capability.

The market is changing so rapidly that there are no sure bets, today or in the near future. This is a statement we've heard echoed by our customers, and it is one of the core philosophies that underpinned many of the innovative new generative AI capabilities announced in our recent Fall Launch.

Relying too heavily on any one AI provider can pose a risk as the pace of innovation shifts. There are already over 180 different open-source LLMs, and the field is changing much faster than teams can put new models to use.

DataRobot's philosophy has been that organizations need to build flexibility into their generative AI strategy based on performance, robustness, cost, and adequacy for the specific LLM task being deployed.

As with all technologies, many LLMs come with trade-offs or are better suited to specific tasks. Some LLMs may excel at particular natural language operations like text summarization, others may produce more diverse text, and others may simply be cheaper to operate. As a result, many LLMs can be best-in-class in different but useful ways. A tech stack that offers the flexibility to select or combine these options helps organizations maximize AI value in a cost-efficient manner.
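One way to picture a cost-performance trade-off is "pick the cheapest model that is good enough for this task." The sketch below assumes you already have a task-specific quality score and a per-token price for each candidate; the model names, scores, and prices are hypothetical placeholders, not real benchmark results or vendor pricing, and this is not a DataRobot API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    """One candidate LLM. All values used below are hypothetical placeholders."""
    name: str
    quality: float             # task-specific eval score in [0, 1], from your own benchmark
    cost_per_1k_tokens: float  # price in USD per 1,000 tokens


def pick_cheapest_adequate(options, min_quality):
    """Return the lowest-cost option whose quality meets the threshold, or None."""
    adequate = [o for o in options if o.quality >= min_quality]
    if not adequate:
        return None
    return min(adequate, key=lambda o: o.cost_per_1k_tokens)


# Hypothetical candidates for a summarization task (illustrative numbers only).
candidates = [
    ModelOption("model-a", quality=0.91, cost_per_1k_tokens=0.030),
    ModelOption("model-b", quality=0.87, cost_per_1k_tokens=0.002),
    ModelOption("model-c", quality=0.78, cost_per_1k_tokens=0.0005),
]

choice = pick_cheapest_adequate(candidates, min_quality=0.85)
print(choice.name)  # model-b
```

Raising or lowering `min_quality` per task is what lets different workloads land on different models: a strict threshold favors the stronger model, while a relaxed one lets the cheapest model win.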

DataRobot operates as an open, unified intelligence layer that lets organizations compare and select the generative AI components that are right for them. This interoperability leads to better generative AI outputs, improves operational continuity, and reduces single-provider dependencies.

With such a strategy, operational processes remain unaffected if, say, a provider is experiencing an internal disruption. Costs can also be managed more efficiently, because organizations are free to make cost-performance trade-offs across their LLMs.
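The continuity argument boils down to a simple pattern: code against a provider-neutral interface and fall back to another provider when one fails. The sketch below is a generic illustration under that assumption. `ProviderError`, the `primary`/`secondary` stubs, and `complete_with_fallback` are hypothetical names; real adapters would wrap each vendor's own SDK, and this is not how DataRobot implements it internally.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider adapter when a call fails (outage, rate limit, etc.)."""


# A provider adapter is modeled as a plain function: prompt -> completion text.
Provider = Callable[[str], str]


def complete_with_fallback(prompt: str, providers: Sequence[tuple[str, Provider]]) -> tuple[str, str]:
    """Try each provider in priority order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # record the failure and try the next provider
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stub adapters for illustration only.
def primary(prompt: str) -> str:
    raise ProviderError("service unavailable")  # simulate an outage


def secondary(prompt: str) -> str:
    return f"summary of: {prompt}"


name, text = complete_with_fallback("quarterly report", [("primary", primary), ("secondary", secondary)])
print(name, "->", text)  # secondary -> summary of: quarterly report
```

Because callers depend only on the `Provider` signature, swapping or reordering the underlying models is a change to the list of adapters rather than to the calling code.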

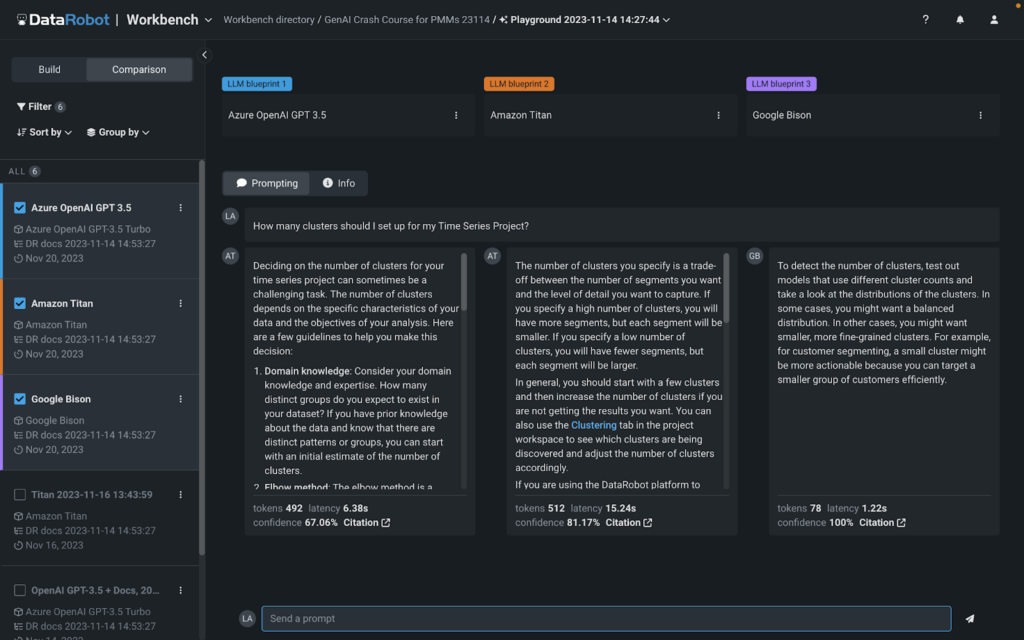

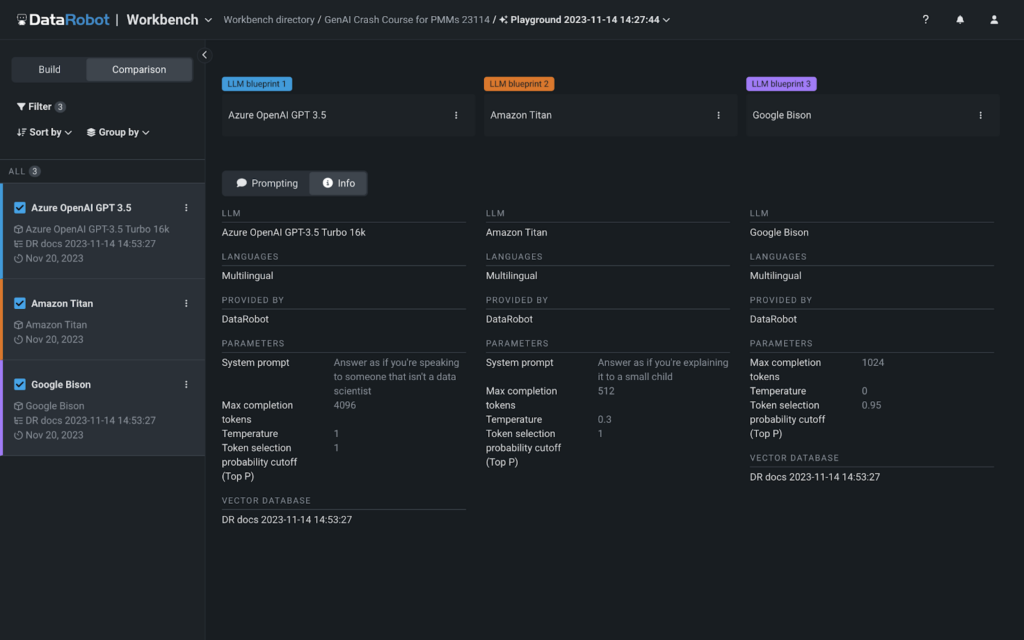

During our Fall Launch, we announced our new multi-provider LLM Playground. This first-of-its-kind visual interface gives you built-in access to Google Cloud Vertex AI, Azure OpenAI, and Amazon Bedrock models so you can easily compare and experiment with different generative AI 'recipes.' You can use any of the built-in LLMs in our playground or bring your own. Access to these LLMs is available out of the box during experimentation, so no additional steps are needed to start building GenAI solutions in DataRobot.

With our new LLM Playground, we've made it easy to try, test, and compare different GenAI 'recipes' in terms of style/tone, cost, and relevance. You can evaluate any combination of foundation model, vector database, chunking strategy, and prompting strategy, whether you prefer to build with the platform UI or in a notebook. The LLM Playground also makes it easy to flip back and forth between code and visualizing your experiments side by side.
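To make "any combination" concrete, a recipe can be treated as one point in a grid of four component choices. The sketch below enumerates that grid and ranks it with a caller-supplied evaluator. Everything here is a hypothetical stand-in: the component names are placeholders, chunking strategy is simplified to a chunk size, and `toy_evaluate` is a stub, whereas a real evaluation would run test questions through each recipe and score relevance, tone, and cost. It is not the Playground's API.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable


@dataclass(frozen=True)
class Recipe:
    """One combination of GenAI components to evaluate (all names are placeholders)."""
    llm: str
    vector_db: str
    chunk_size: int     # chunking strategy, simplified to a chunk length in characters
    prompt_style: str


def build_grid(llms, vector_dbs, chunk_sizes, prompt_styles) -> list[Recipe]:
    """Enumerate every combination of the four component choices."""
    return [Recipe(*combo) for combo in product(llms, vector_dbs, chunk_sizes, prompt_styles)]


def rank_recipes(recipes, evaluate: Callable[[Recipe], float]) -> list[tuple[float, Recipe]]:
    """Score each recipe with the supplied evaluator and sort best-first (ties keep grid order)."""
    scored = [(evaluate(r), r) for r in recipes]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


grid = build_grid(
    llms=["llm-a", "llm-b"],
    vector_dbs=["vdb-1", "vdb-2"],
    chunk_sizes=[256, 512],
    prompt_styles=["concise", "detailed"],
)
print(len(grid))  # 16


# Stub evaluator for illustration only; it simply encodes arbitrary preferences.
def toy_evaluate(r: Recipe) -> float:
    return (r.llm == "llm-b") + 0.5 * (r.chunk_size == 256) + 0.25 * (r.prompt_style == "concise")


best_score, best = rank_recipes(grid, toy_evaluate)[0]
print(best_score, best.llm, best.chunk_size, best.prompt_style)  # 1.75 llm-b 256 concise
```

The grid grows multiplicatively (two options for each of four components already gives 16 recipes), which is why a side-by-side comparison view is useful once more than a handful of choices are on the table.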

With DataRobot, you can also hot-swap underlying components (like LLMs) without breaking production if your organization's needs change or the market evolves. This not only lets you calibrate your generative AI solutions to your exact requirements, but also ensures you maintain technical autonomy, with all the best-of-breed components right at your fingertips.

You can see below exactly how easy it is to compare different generative AI 'recipes' with our LLM Playground.

Once you've chosen the right 'recipe' for you, you can quickly and easily move it, along with your vector database and prompting strategies, into production. Once in production, you get full end-to-end generative AI lineage, monitoring, and reporting.

With DataRobot's generative AI offering, organizations can easily choose the right tools for the job and safely extend their internal data to LLMs, while also measuring outputs for toxicity, truthfulness, and cost, among other KPIs. We like to say, "We're not building LLMs, we're solving the confidence problem for generative AI."

The generative AI ecosystem is complex and changing every day. At DataRobot, we make sure you have a flexible and resilient approach. Think of it as an insurance policy and a safeguard against stagnation in an ever-evolving technological landscape, ensuring both data scientists' agility and CIOs' peace of mind. The reality is that an organization's strategy shouldn't be constrained by a single provider's world view, rate of innovation, or internal turmoil. It's about building the resilience and speed to evolve your organization's generative AI strategy so that you can adapt as the market evolves, which it can do quickly!

You can learn more about how else we're solving the 'confidence problem' by watching our Fall Launch event on demand.

About the author

Ted Kwartler is the Field CTO at DataRobot. Ted sets product strategy for explainable and ethical uses of data technology. Ted brings unique insights and experience in applying data, business acumen, and ethics to his current and previous positions at Liberty Mutual Insurance and Amazon. In addition to having four DataCamp courses, he teaches graduate courses at the Harvard Extension School and is the author of "Text Mining in Practice with R." Ted is an advisor to the US Government Bureau of Economic Affairs, sitting on a Congressionally mandated committee called the "Advisory Committee for Data for Evidence Building," advocating for data-driven policies.