Joy Buolamwini’s AI research was attracting attention years before she received her Ph.D. from the MIT Media Lab in 2022. As a graduate student, she made waves with a 2016 TED talk about algorithmic bias that has received more than 1.6 million views to date. In the talk, Buolamwini, who is Black, showed that standard facial detection systems didn’t recognize her face unless she put on a white mask. During the talk, she also brandished a shield emblazoned with the logo of her new organization, the Algorithmic Justice League, which she said would fight for people harmed by AI systems, people she would later come to call the excoded.

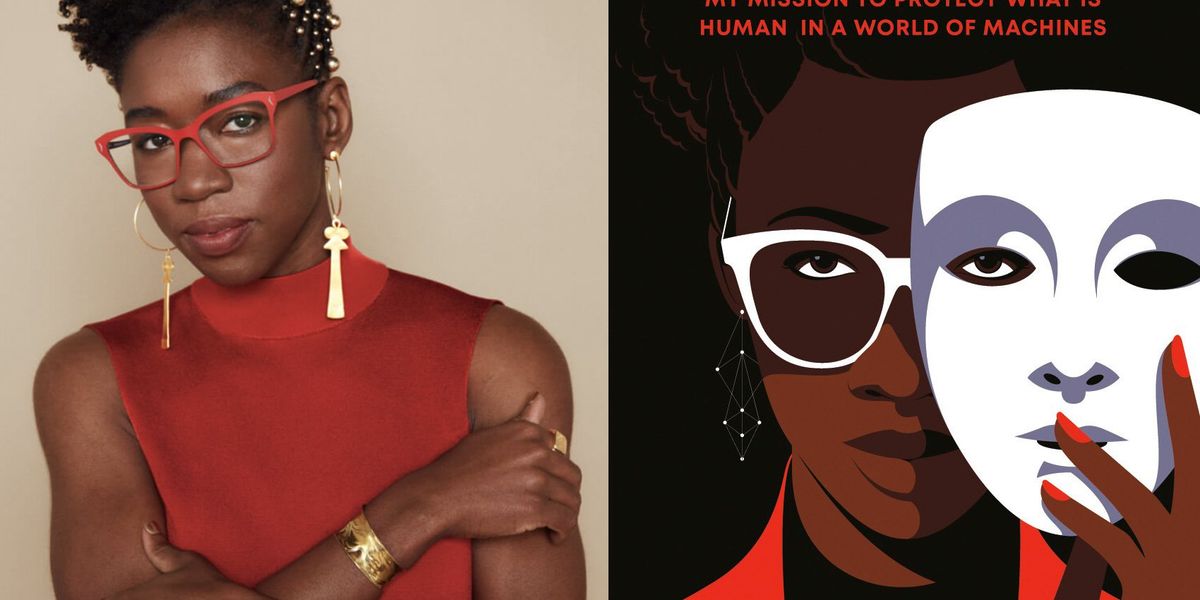

In her new book, Unmasking AI: My Mission to Protect What Is Human in a World of Machines, Buolamwini describes her own awakenings to the clear and present dangers of today’s AI. She explains her research on facial recognition systems and the Gender Shades research project, in which she showed that commercial gender classification systems consistently misclassified dark-skinned women. She also narrates her stratospheric rise: in the years since her TED talk, she has presented at the World Economic Forum, testified before Congress, and participated in President Biden’s roundtable on AI.

While the book is an interesting read on an autobiographical level, it also contains useful prompts for AI researchers who are ready to question their assumptions. She reminds engineers that default settings are not neutral, that convenient datasets may be rife with ethical and legal problems, and that benchmarks aren’t always assessing the right things. Via email, she answered IEEE Spectrum’s questions about how to be a principled AI researcher and how to change the status quo.

One of the most interesting parts of the book for me was your detailed description of how you did the research that became Gender Shades: how you figured out a data collection method that felt ethical to you, struggled with the inherent subjectivity in devising a classification scheme, did the labeling labor yourself, and so on. It seemed to me like the opposite of the Silicon Valley “move fast and break things” ethos. Can you imagine a world in which every AI researcher is so scrupulous? What would it take to get to such a state of affairs?

Joy Buolamwini: When I was earning my academic degrees and learning to code, I did not have examples of ethical data collection. Basically, if the data were available online, it was there for the taking. It can be difficult to imagine another way of doing things, if you never see an alternative pathway. I do believe there is a world where more AI researchers and practitioners exercise more caution with data-collection activities, because of the engineers and researchers who reach out to the Algorithmic Justice League looking for a better way. Change starts with conversation, and we are having important conversations today about data provenance, classification systems, and AI harms that when I started this work in 2016 were often seen as insignificant.

What can engineers do if they’re concerned about algorithmic bias and other issues regarding AI ethics, but they work for a typical big tech company? The kind of place where nobody questions the use of convenient datasets or asks how the data was collected and whether there are problems with consent or bias? Where they’re expected to produce results that measure up against standard benchmarks? Where the choices seem to be: Go along with the status quo or find a new job?

Buolamwini: I cannot stress the importance of documentation. In conducting algorithmic audits and approaching well-known tech companies with the results, one issue that came up time and time again was the lack of internal awareness about the limitations of the AI systems that were being deployed. I do believe adopting tools like datasheets for datasets and model cards for models, approaches that provide an opportunity to see the data used to train AI models and the performance of those AI models in various contexts, is an important starting point.

Just as important is acknowledging the gaps, so AI tools are not presented as working in a universal manner when they are optimized for just a specific context. These approaches can show how robust or not an AI system is. Then the question becomes: Is the company willing to release a system with the limitations documented, or are they willing to go back and make improvements?

It can be helpful to not view AI ethics separately from developing robust and resilient AI systems. If your tool doesn’t work as well on women or people of color, you are at a disadvantage compared to companies who create tools that work well for a variety of demographics. If your AI tools generate harmful stereotypes or hate speech, you are at risk for reputational damage that can impede a company’s ability to recruit necessary talent, secure future customers, or gain follow-on investment. If you adopt AI tools that discriminate against protected classes for core areas like hiring, you risk litigation for violating antidiscrimination laws. If AI tools you adopt or create use data that violates copyright protections, you open yourself up to litigation. And with more policymakers looking to regulate AI, companies that ignore issues of algorithmic bias and AI discrimination may end up facing costly penalties that could have been avoided with more forethought.

“It can be difficult to imagine another way of doing things, if you never see an alternative pathway.” - Joy Buolamwini, Algorithmic Justice League

You write that “the choice to stop is a viable and necessary option” and say that we can reverse course even on AI tools that have already been adopted. Would you like to see a course reversal on today’s tremendously popular generative AI tools, including chatbots like ChatGPT and image generators like Midjourney? Do you think that’s a feasible possibility?

Buolamwini: Facebook (now Meta) deleted a billion faceprints around the time of a [US] $650 million settlement after they faced allegations of collecting face data to train AI models without the expressed consent of users. Clearview AI stopped offering services in a number of Canadian provinces after investigations into their data-collection process were challenged. These actions show that when there is resistance and scrutiny there can be change.

You describe how you welcomed the AI Bill of Rights as an “affirmative vision” for the kinds of protections needed to preserve civil rights in the age of AI. That document was a nonbinding set of guidelines for the federal government as it began to think about AI regulations. Just a few weeks ago, President Biden issued an executive order on AI that followed up on many of the ideas in the Bill of Rights. Are you satisfied with the executive order?

Buolamwini: The EO [executive order] on AI is a welcomed development as governments take more steps toward preventing harmful uses of AI systems, so more people can benefit from the promise of AI. I commend the EO for centering the values of the AI Bill of Rights, including protection from algorithmic discrimination and the need for effective AI systems. Too often AI tools are adopted based on hype without seeing if the systems themselves are fit for purpose.

You’re dismissive of concerns about AI becoming superintelligent and posing an existential risk to our species, and write that “existing AI systems with demonstrated harms are more dangerous than hypothetical ‘sentient’ AI systems because they are real.” I remember a tweet from last June in which you talked about people concerned with existential risk and said that you “see room for strategic cooperation” with them. Do you still feel that way? What might that strategic cooperation look like?

Buolamwini: The “x-risk” I am concerned about, which I talk about in the book, is the x-risk of being excoded, that is, being harmed by AI systems. I am concerned with lethal autonomous weapons and giving AI systems the ability to make kill decisions. I am concerned with the ways in which AI systems can be used to kill people slowly through lack of access to adequate health care, housing, and economic opportunity.

I don’t think you make change in the world by only talking to people who agree with you. A lot of the work with AJL has been engaging with stakeholders with different viewpoints and ideologies to better understand the incentives and concerns that are driving them. The recent U.K. AI Safety Summit is an example of a strategic cooperation where a variety of stakeholders convened to explore safeguards that can be put in place on near-term AI risks as well as emerging threats.

As part of the Unmasking AI book tour, Sam Altman and I recently had a conversation on the future of AI where we discussed our differing viewpoints as well as found common ground: namely, that companies cannot be left to govern themselves when it comes to preventing AI harms. I believe these kinds of discussions provide opportunities to go beyond incendiary headlines. When Sam was talking about AI enabling humanity to be better (a frame we see so often with the creation of AI tools), I asked which humans will benefit. What happens when the digital divide becomes an AI chasm? In asking these questions and bringing in marginalized perspectives, my aim is to challenge the entire AI ecosystem to be more robust in our analysis and hence less harmful in the processes we create and systems we deploy.

What’s next for the Algorithmic Justice League?

Buolamwini: AJL will continue to raise public awareness about specific harms that AI systems produce and the steps we can put in place to address those harms, and will continue to build out our harms reporting platform, which serves as an early-warning mechanism for emerging AI threats. We will continue to protect what is human in a world of machines by advocating for civil rights, biometric rights, and creative rights as AI continues to evolve. Our latest campaign is around TSA use of facial recognition, which you can learn more about via fly.ajl.org.

Think about the state of AI today, encompassing research, commercial activity, public discourse, and regulations. Where are you on a scale of 1 to 10, if 1 is something along the lines of outraged/horrified/depressed and 10 is hopeful?

Buolamwini: I would offer a less quantitative measure and instead offer a poem that better captures my sentiments. I am overall hopeful, because my experiences since my fateful encounter with a white mask and a face-tracking system years ago have shown me change is possible.

THE EXCODED

To the Excoded

Resisting and revealing the lie

That we must accept

The surrender of our faces

The harvesting of our data

The plunder of our traces

We celebrate your courage

No Silence

No Consent

You show the path to algorithmic justice requires a league

A sisterhood, a neighborhood,

Hallway gatherings

Sharpies and posters

Coalitions Petitions Testimonies, Letters

Analysis and potlucks

Dancing and music

Everyone playing a role to orchestrate change

To the excoded and freedom fighters around the world

Persisting and prevailing against

algorithms of oppression

automating inequality

through weapons of math destruction

we Stand with you in gratitude

You demonstrate the people have a voice and a choice.

When defiant melodies harmonize to elevate

human life, dignity, and rights.

The victory is ours.
